TensorFlow

Tensors

Tensors are the de facto standard for representing data in deep learning.

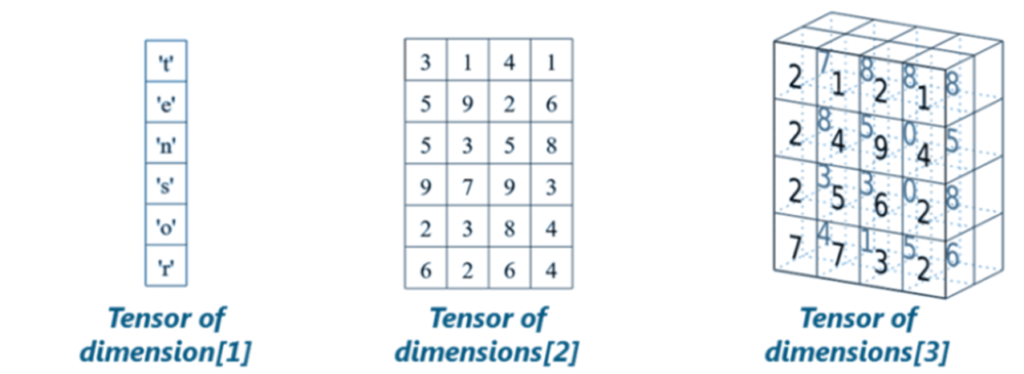

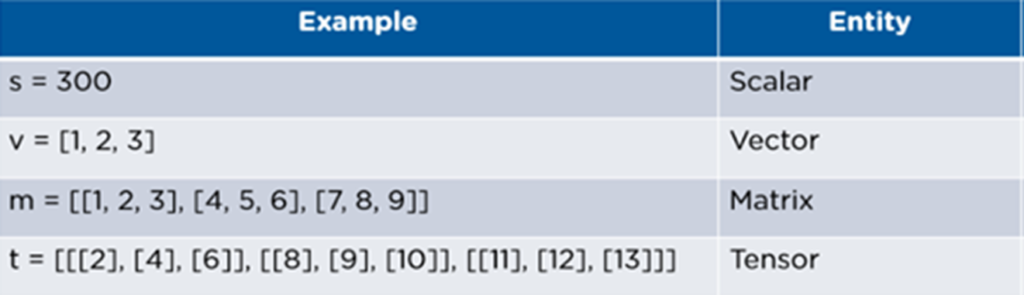

- Tensors are just multidimensional arrays, that allows you to represent data having higher dimensions.

- Tensor Flow is a library based on Python that provides different types of functionality for implementing Deep Learning Models

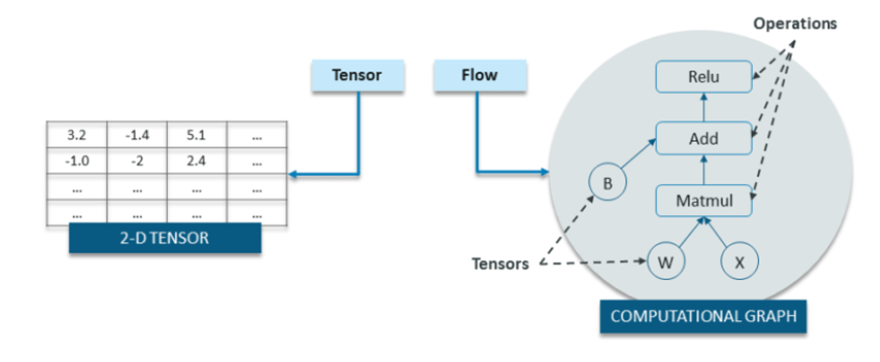

- Tensor Flow is made up of two terms – Tensor & Flow
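To make "higher-dimensional data" concrete, here is a small sketch that uses a NumPy array as a stand-in for a tensor (TensorFlow can build a tensor from such an array, for example with tf.constant). The batch of images and its sizes are made up purely for illustration.

```python
import numpy as np

# Hypothetical batch of 2 RGB images, each 3 pixels high and 4 pixels wide.
# Axes: (batch, height, width, channels)
images = np.zeros((2, 3, 4, 3), dtype=np.float32)

# Set the (R, G, B) values of one pixel in the first image
images[0, 1, 2] = [1.0, 0.5, 0.0]

print(images.ndim)       # 4
print(images.shape)      # (2, 3, 4, 3)
print(images[0].shape)   # one image: (3, 4, 3)
```

Each extra axis adds one dimension: a single image needs three axes (height, width, channels), and stacking several images adds a fourth.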

Tensor Attributes

TensorFlow API

Basic Notations

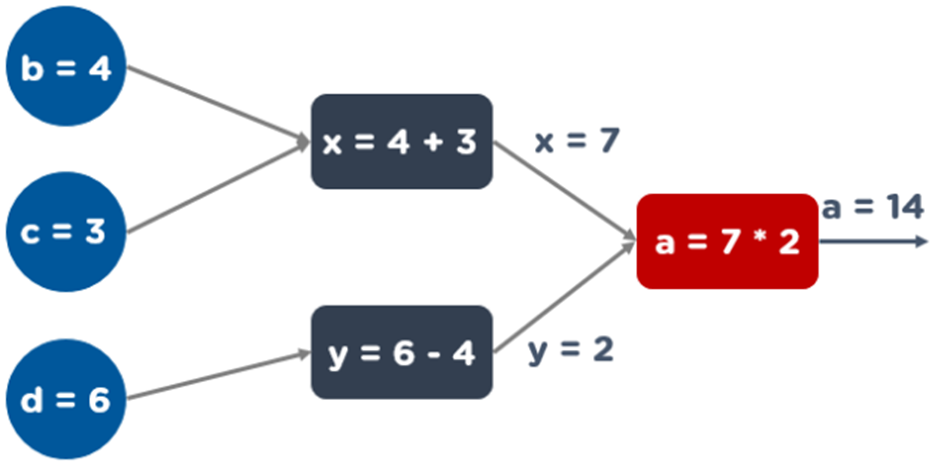

Node:

- A node represents an operation that may take inputs and can produce an output that is passed on to other nodes

- Example: a = matmul(x, w)

- Here matmul() is a node

- Note: In TensorFlow 1.x, the value of a node is computed by running it with a Session object

Edges / Tensor objects

- The graph has to be built before it is run with a Session object

- While the graph is being built, the result of one node can be passed on (it "flows") to other nodes

- These references between nodes are called edges, or Tensor objects

Session()

- Session() executes the computational graph and controls the state of the TensorFlow runtime

- In TensorFlow 1.x, graph-based programs cannot be executed without a Session; TensorFlow 2.x executes operations eagerly by default and keeps Session only as tf.compat.v1.Session

Draw the graph

a(b, c, d) = (b + c) * (d - 4)

x = (b + c)

y = (d - 4)

a = x * y
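The graph for a(b, c, d) can be sketched in plain Python: each node stores its operation plus references (edges) to the nodes it consumes, and the nodes are evaluated in dependency order. This is a toy illustration using the standard-library graphlib module (Python 3.9+), not TensorFlow's API; the input values b = 2, c = 3, d = 6 are arbitrary.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Node name -> (operation, names of the nodes whose outputs it consumes).
# The names in the second element are the edges of the graph.
GRAPH = {
    "x": (lambda b, c: b + c, ("b", "c")),
    "y": (lambda d: d - 4, ("d",)),
    "a": (lambda x, y: x * y, ("x", "y")),
}


def evaluate(graph, inputs):
    """Compute every node after all of its inputs; return every node's value."""
    deps = {name: set(srcs) for name, (_, srcs) in graph.items()}
    values = dict(inputs)  # values of the input nodes b, c and d
    for name in TopologicalSorter(deps).static_order():
        if name in graph:  # input nodes already have values
            op, srcs = graph[name]
            values[name] = op(*(values[s] for s in srcs))
    return values


values = evaluate(GRAPH, {"b": 2, "c": 3, "d": 6})
print(values["x"], values["y"], values["a"])  # 5 2 10
```

The topological order guarantees that x and y are computed before a, which is exactly the dependency the drawn graph expresses.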

Determine Rank

Ranks

- The rank of a tensor is the number of dimensions used to represent the data. For example:

Rank 0: a single value with no dimensions, also called a scalar.

- Example: s = 2000

Rank 1: a one-dimensional array, called a vector.

- Example: v = [10, 11, 12]

Rank 2: a two-dimensional array, traditionally known as a matrix.

- Example: m = [[1,2,3],[4,5,6]]

Rank 3: a three-dimensional array; arrays with three or more dimensions are generally just called tensors.

- Example: t = [[[1],[2],[3]],[[4],[5],[6]],[[7],[8],[9]]]
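The rank of each example can be checked with a short sketch that treats nested Python lists as tensors. It assumes the nesting is regular (not ragged), so the first element at each level stands for the whole level; the helper names shape and rank are illustrative, not TensorFlow functions.

```python
def shape(value):
    """Shape of a nested-list tensor, assuming regular (non-ragged) nesting."""
    dims = []
    while isinstance(value, list):
        dims.append(len(value))
        value = value[0] if value else None  # descend one dimension
    return tuple(dims)


def rank(value):
    """Rank = number of dimensions = length of the shape."""
    return len(shape(value))


s = 2000
v = [10, 11, 12]
m = [[1, 2, 3], [4, 5, 6]]
t = [[[1], [2], [3]], [[4], [5], [6]], [[7], [8], [9]]]

for name, value in [("s", s), ("v", v), ("m", m), ("t", t)]:
    print(name, rank(value), shape(value))
# s 0 ()
# v 1 (3,)
# m 2 (2, 3)
# t 3 (3, 3, 1)

print(rank([2000]))  # 1: a one-element list is a vector, not a scalar
```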

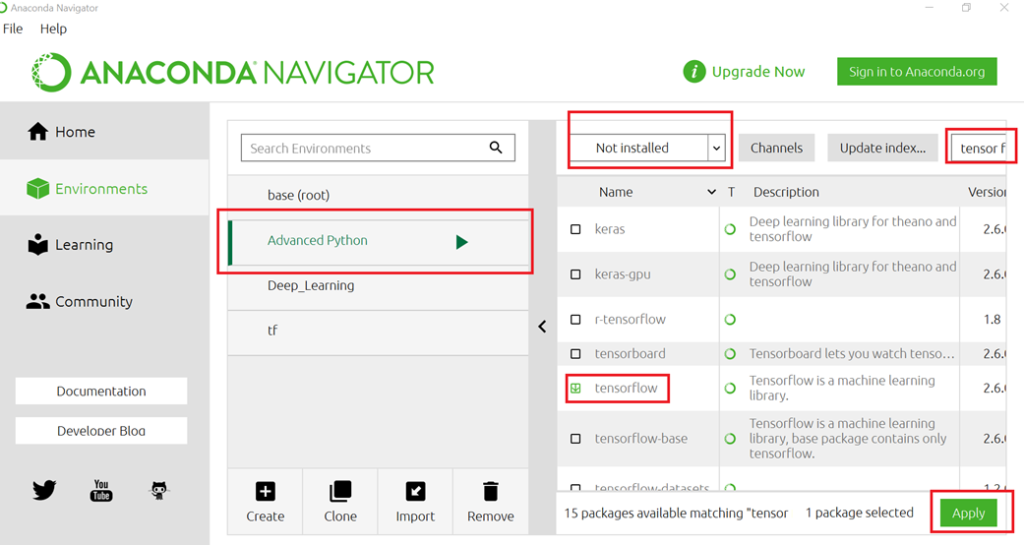

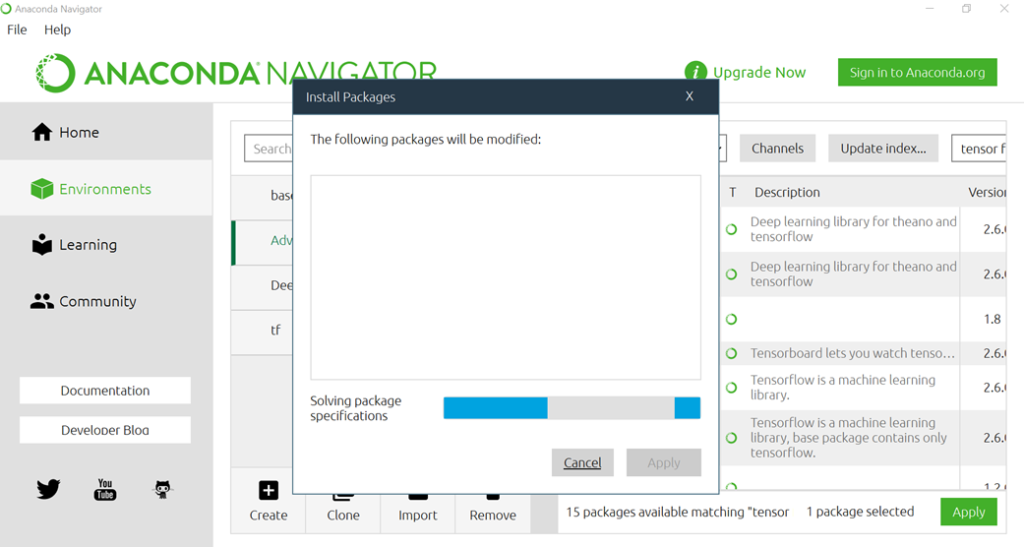

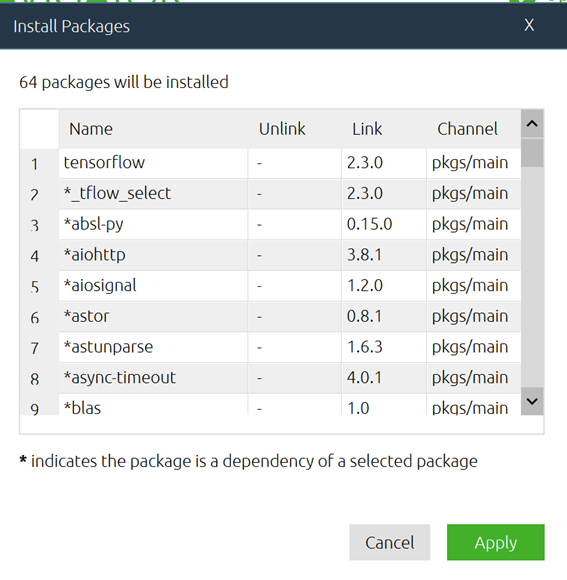

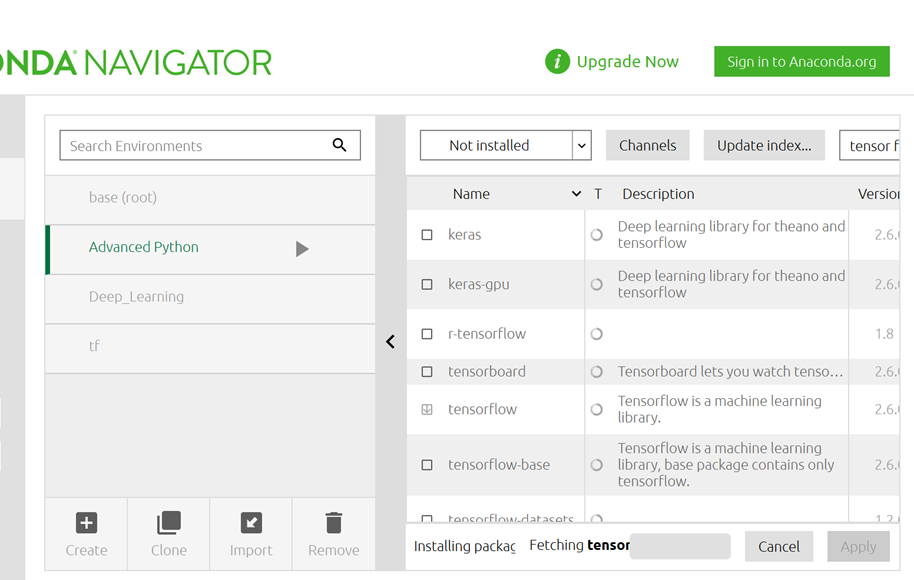

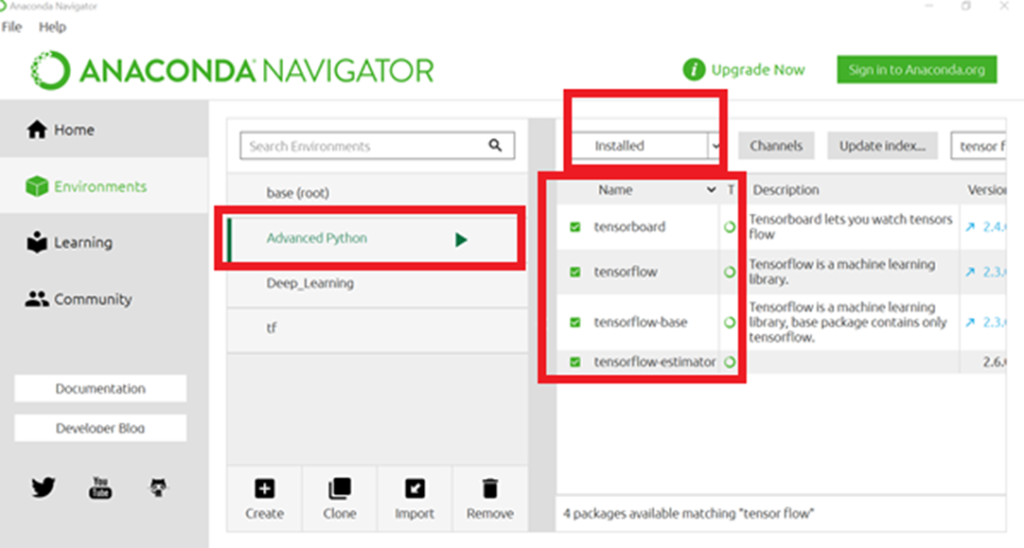

Installation without the Command Prompt

Step 1: Search for the package

Step 2: Install the package

Step 3: Review the dependency packages that are displayed

Step 4: Check the installation status

Step 5: Completion of installation

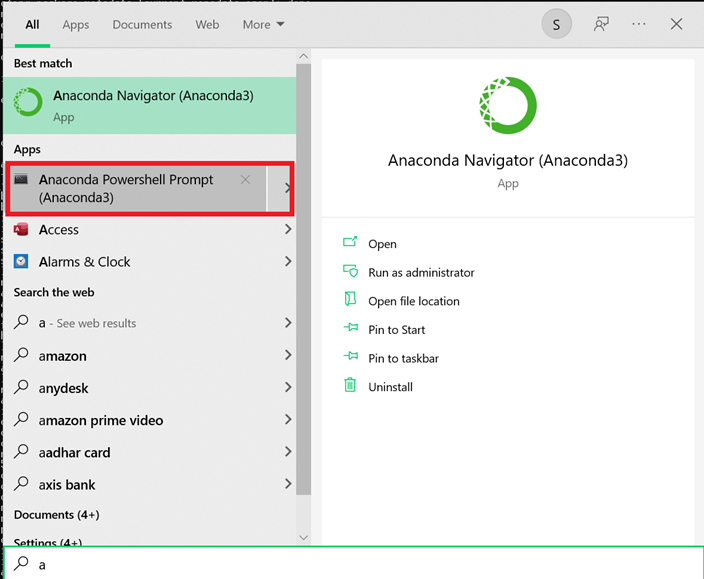

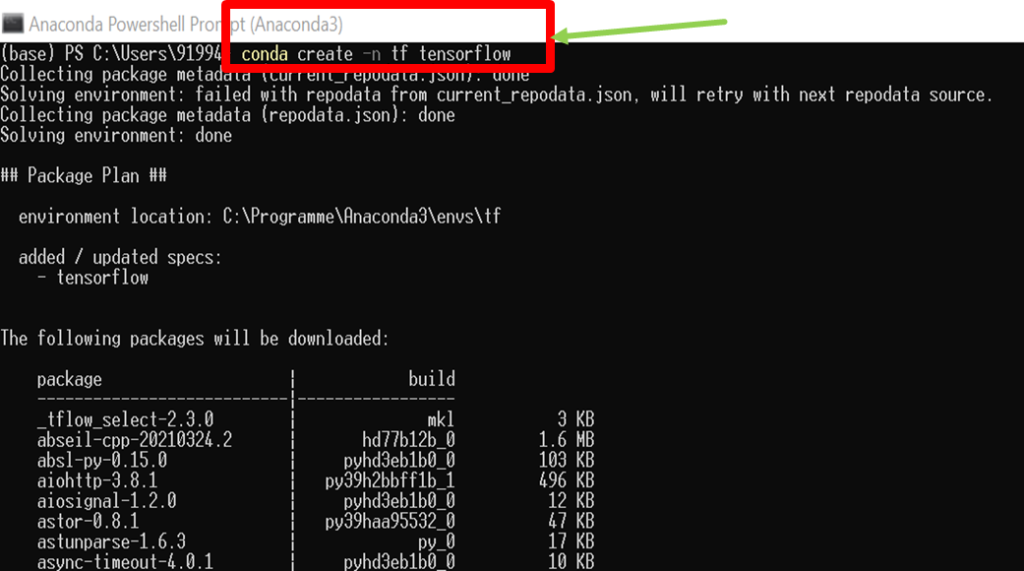

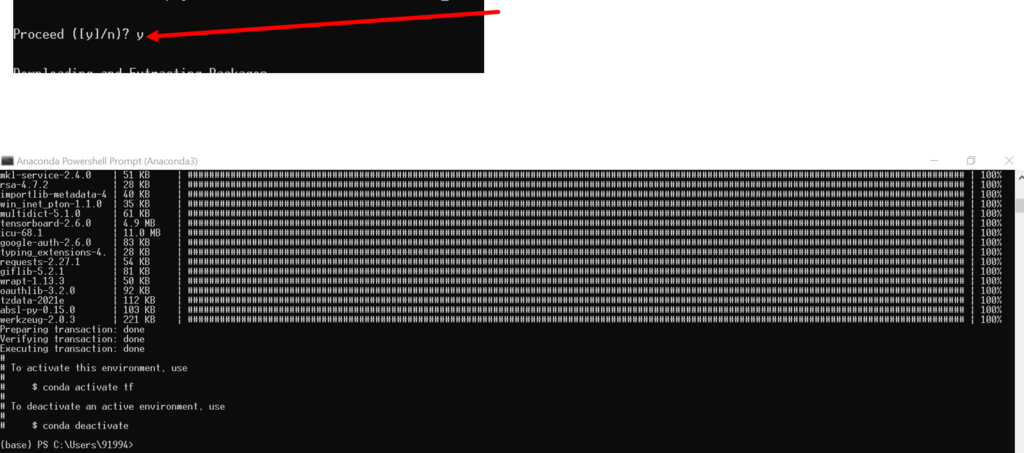

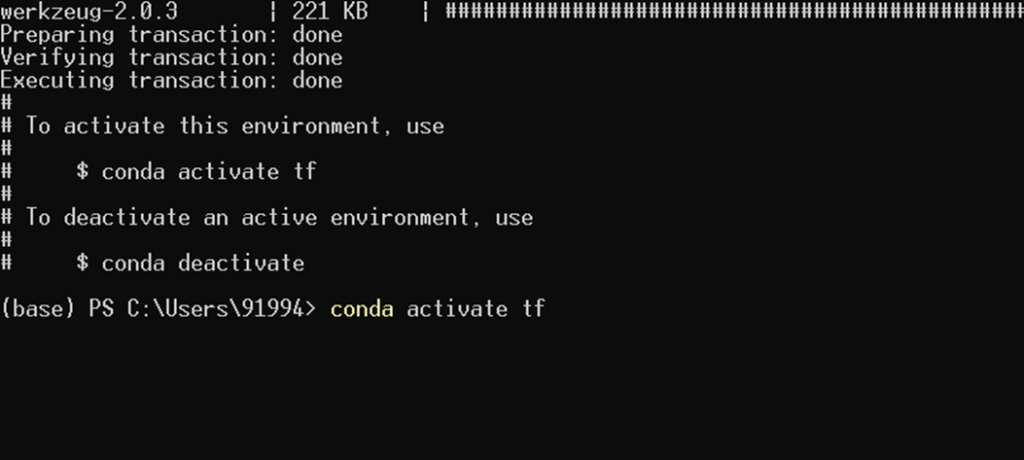

Installation with command Prompt

Step 1: In the start menu, select Anaconda Powershell Prompt (Anaconda 3)

Step 2: Type the command conda create –n tf tensorflow And press enter

Step 3: Give y

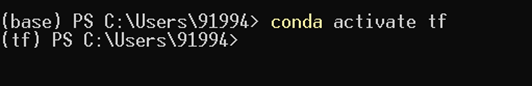

Step 4: Type conda activate tf and press enter

Step 5: Finally, the screen will look like this

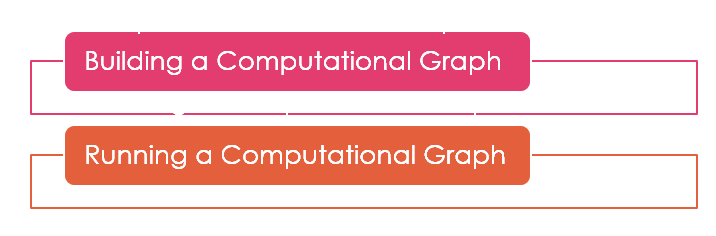
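To confirm that Python inside the activated environment can actually find TensorFlow, a small check like the one below can be run; it does the same job as python -c "import tensorflow as tf; print(tf.__version__)" but reports a missing package instead of raising an error. It uses only the standard library, and the function name tensorflow_version is illustrative.

```python
import importlib
import importlib.util


def tensorflow_version():
    """Return the installed TensorFlow version string, or None if it is not installed."""
    if importlib.util.find_spec("tensorflow") is None:
        return None
    tf = importlib.import_module("tensorflow")
    return tf.__version__


if __name__ == "__main__":
    version = tensorflow_version()
    if version is None:
        print("TensorFlow is not installed in this environment")
    else:
        print("TensorFlow", version)
```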

Writing a TensorFlow Program

The process involves two steps:

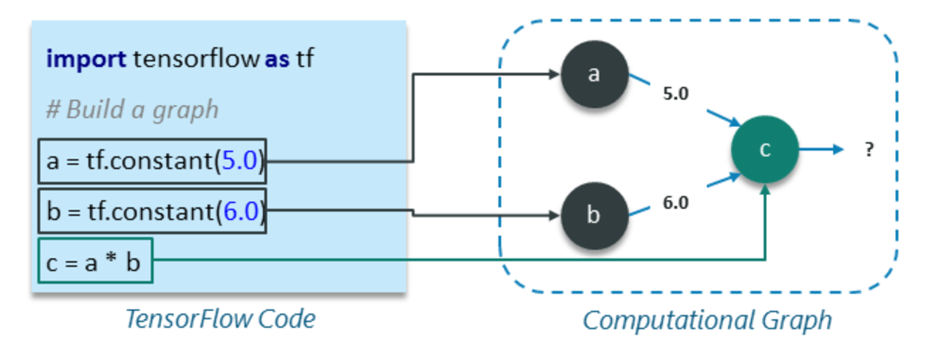

Building a Computational Graph

- A computational graph is a series of TensorFlow operations arranged as nodes in the graph

- Each node takes zero or more tensors as input and produces a tensor as output

- Example: Computational Graph with three nodes a, b and c

Running a Computational Graph

- To get the output of node c, run the computational graph within a session

- A session places the graph operations onto devices, such as CPUs or GPUs, and provides methods to execute them

- A session encapsulates the control and state of the TensorFlow runtime: it stores the order in which the operations will be performed and passes the result of each computed operation to the next operation in the pipeline
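The two phases, building a graph and then running it in a session, can be mimicked with a toy pure-Python sketch. Node and ToySession are hypothetical classes written for this illustration, not TensorFlow's API; they only show that building computes nothing, while running evaluates the inputs first and passes each result on to the next node.

```python
class Node:
    """A graph node: an operation plus references (edges) to its input nodes."""

    def __init__(self, name, op, inputs=()):
        self.name = name
        self.op = op          # callable applied to the input values
        self.inputs = inputs  # edges: references to other Node objects


class ToySession:
    """Evaluates a node on demand, computing each node at most once per run."""

    def __init__(self):
        self.order = []  # order in which nodes were actually computed

    def run(self, node):
        self.order = []
        return self._eval(node, {})

    def _eval(self, node, cache):
        if node.name not in cache:
            args = [self._eval(inp, cache) for inp in node.inputs]
            cache[node.name] = node.op(*args)  # pass results to this node
            self.order.append(node.name)
        return cache[node.name]


# Phase 1: build the graph with three nodes a, b and c (nothing is computed yet)
a = Node("a", lambda: 5)
b = Node("b", lambda: 6)
c = Node("c", lambda x, y: x + y, inputs=(a, b))

# Phase 2: run the graph to get the output of node c
sess = ToySession()
result = sess.run(c)
print("result=", result)  # result= 11
print(sess.order)         # ['a', 'b', 'c']
```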

Data types

Constants

Constants are used to create tensors whose values cannot change.

```python
# Signature (TensorFlow 1.x; verify_shape was removed in TensorFlow 2.x)
tf.constant(value, dtype=None, shape=None, name='Const', verify_shape=False)

S = tf.constant(2, name='scalar')
M = tf.constant([[1, 2], [3, 4]], name='matrix')
```

Creating constants

```python
import tensorflow as tf

# Session-based code needs graph mode in TensorFlow 2.x
tf.compat.v1.disable_eager_execution()

with tf.compat.v1.Session() as s:
    # Build the computational graph
    a = tf.constant(5)
    b = tf.constant(6)
    c = tf.add(a, b)

    # Run the graph using the session object and store the output in a variable
    result = s.run(c)

    # Print the output of the node
    print("result=", result)
# Leaving the with block closes the session and frees its resources
```

```text
result= 11
```

Variables

Variables are stateful nodes that output their current value. Their values can be saved and restored.

Example

tf.get_variable( name, shape = None, dtype = None, initializer = None)S1 = tf.get_variable(name = ‘scalar1’, initializer = 2)

M1 = tf.get_variable(“matrix”, initializer = tf.constant( [0,1], [2,3])with tf.compat.v1.Session() as s:

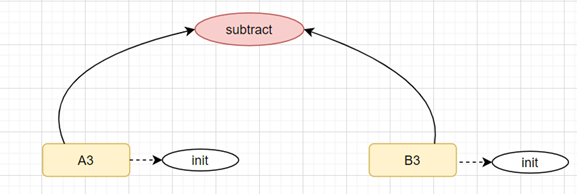

#create graph

a1 = tf.compat.v1.get_variable(name="A3", initializer = tf.constant([[0,1],[2,3]]))

b1 = tf.compat.v1.get_variable(name="B3", initializer = tf.constant([[4,5],[6,7]]))

c1 = tf.subtract(a1,b1,name = "subtract")

#Initialize variables

init_op = tf.compat.v1.global_variables_initializer()

#Run the graph

s.run(init_op)

print("Result = ", s.run(c1))

Result = [[-4 -4]

[-4 -4]]Placeholder

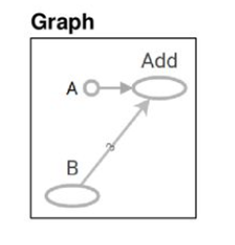

- Placeholder is a node whose value is fed in at execution time

- Assemble the graph without knowing the values needed for computation

- Later supply the data at execution time

```python
import tensorflow as tf

tf.compat.v1.disable_eager_execution()  # placeholder() only works in graph mode

a = tf.constant([5, 5, 5], tf.float32, name="A5")
b = tf.compat.v1.placeholder(tf.float32, shape=[3], name="B5")
c = tf.add(a, b, name="Add")

with tf.compat.v1.Session() as s:
    # Supply the value of placeholder b at execution time
    d = {b: [1, 2, 3]}
    print(s.run(c, feed_dict=d))
```

```text
[6. 7. 8.]
```

Aggregate Functions

```python
import tensorflow as tf

tf.compat.v1.disable_eager_execution()  # run the example in graph mode with a Session

ten_obj = tf.constant([[1, 2, 3], [-1, 0, 4], [-4, 3, 100]])

with tf.compat.v1.Session() as s:
    # Each reduction runs over all elements of the tensor
    sum1 = s.run(tf.math.reduce_sum(ten_obj))
    # ten_obj is int32, so reduce_mean returns an integer (108 / 9 = 12)
    mean1 = s.run(tf.math.reduce_mean(ten_obj))
    min1 = s.run(tf.math.reduce_min(ten_obj))
    max1 = s.run(tf.math.reduce_max(ten_obj))

    print('Min:', min1)
    print('Max:', max1)
    print("sum=", sum1)
    print("Mean=", mean1)
```

```text
Min: -4
Max: 100
sum= 108
Mean= 12
```

TensorFlow Built-in Functions

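tf.zeros

- Creates a tensor of the given shape with every element set to zero

tf.ones

- Creates a tensor of the given shape with every element set to one

tf.negative

- Computes the numerical negative of every element (y = -x), so negative values become positive and vice versa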
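NumPy has direct counterparts of these three functions, which makes their element-wise behavior easy to check without a TensorFlow install. This is a sketch with NumPy standing in for TensorFlow; the shapes and values are arbitrary. Note in particular that negation flips the sign of every element rather than forcing all values to be negative.

```python
import numpy as np

zeros = np.zeros((2, 3))          # counterpart of tf.zeros([2, 3])
ones = np.ones((2, 3))            # counterpart of tf.ones([2, 3])

x = np.array([[1, -2], [0, 3]])
neg = np.negative(x)              # counterpart of tf.negative(x): y = -x

print(zeros.sum(), ones.sum())    # 0.0 6.0
print(neg.tolist())               # [[-1, 2], [0, -3]]
```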

Mathematical Operations

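- tf.add(a, b): element-wise a + b

- tf.subtract(a, b): element-wise a - b

- tf.multiply(a, b): element-wise a * b

- tf.divide(a, b): element-wise a / b (tf.div in TensorFlow 1.x)

- tf.pow(a, b): element-wise a raised to the power b

- tf.exp(a): element-wise e raised to the power a (expects floating-point input)

- tf.sqrt(a): element-wise square root (expects floating-point input)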
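A worked example of these element-wise operations, again using NumPy functions as stand-ins for the TensorFlow ones (each TensorFlow operation above has a NumPy function with the same element-wise meaning). The input values are chosen so the results are easy to verify by hand, and both inputs are floats because exp and sqrt expect floating-point data.

```python
import numpy as np

a = np.array([1.0, 4.0, 9.0])
b = np.array([2.0, 2.0, 2.0])

# NumPy counterpart of each TensorFlow operation in the list
results = {
    "tf.add": np.add(a, b),            # [3, 6, 11]
    "tf.subtract": np.subtract(a, b),  # [-1, 2, 7]
    "tf.multiply": np.multiply(a, b),  # [2, 8, 18]
    "tf.divide": np.divide(a, b),      # [0.5, 2, 4.5]
    "tf.pow": np.power(a, b),          # [1, 16, 81]
    "tf.exp": np.exp(a),               # [e^1, e^4, e^9]
    "tf.sqrt": np.sqrt(a),             # [1, 2, 3]
}

for name, value in results.items():
    print(name, value)
```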